# can be idle in the pool before it is invalidated. # The SqlAlchemy pool recycle is the number of seconds a connection # no limit will be placed on the total number of concurrent connections. # max_overflow can be set to -1 to indicate no overflow limit # and the total number of "sleeping" connections the pool will allow is pool_size. # It follows then that the total number of simultaneous connections the pool will allow is pool_size + max_overflow, # When those additional connections are returned to the pool, they are disconnected and discarded. # additional connections will be returned up to this limit. # When the number of checked-out connections reaches the size set in pool_size, # The SqlAlchemy pool size is the maximum number of database connections # If SqlAlchemy should pool database connections. Sql_alchemy_conn = postgresql+psycopg2://localhost:5436/airflow_db?user=svc_etl&password= #! this needs to change to MySQL or Postgres database

#sql_alchemy_conn = sqlite:////home/airflow/airflow.db # SqlAlchemy supports many different database engine, more information # The SqlAlchemy connection string to the metadata database. # SequentialExecutor, LocalExecutor, CeleryExecutor, DaskExecutor, KubernetesExecutor # The executor class that airflow should use. # can be utc (default), system, or any IANA timezone string (e.g. # Default timezone in case supplied date times are naive # If using IP address as hostname is preferred, use value ":get_host_ip_address" # No argument should be required in the function specified. # default value "socket:getfqdn" means that result from getfqdn() of "socket" package will be used as hostname # Hostname by providing a path to a callable, which will resolve the hostname logĭag_processor_manager_log_location = /home/airflow/logs/dag_processor_manager/dag_processor_manager.log # Colour the logs when the controlling terminal is a TTY.Ĭolored_log_format =. # logging_config_class = my.fault_local_settings.LOGGING_CONFIG # This class has to be on the python classpath # Specify the class that will specify the logging configuration If remote_logging is set to true, see UPDATING.md for additional # Users must supply an Airflow connection id that provides access to the storage # Airflow can store logs remotely in AWS S3, Google Cloud Storage or Elastic Search. # > writing logs to the GIT main folder is a very bad idea and needs to change soon!!!īase_log_folder = /ABTEILUNG/DWH/airflow/logs # The folder where airflow should store its log files # The folder where your airflow pipelines live, most likely aĭags_folder = /ABTEILUNG/DWH/airflow/dags How to find out which component of Airflow / Postgres generates this behaviour?.Log and DAG folders for both servers are at different locations and separated from each other. 
DAGs run successfully on a different Airflow test server which is sequential execution only.Manual executions can lead (depending on DAG definition) to same behaviour.Clearing the error lead to successful execution of first task, then after further Clears the first and subsequent tasks succeed (and write task logs). Scheduled tasks will still show up as failed in GUI and in database. Restart Airflow scheduler, copy DAGs back to folders and wait. Stop Airflow scheduler, delete all DAGs and their logs from folders and in database.No errors appear in the Airflow scheduler log nor are there logs from the tasks themselves. These tasks can be either complete modules or individual functions. Any further use of Clear leads to execution of subsequent tasks. In some cases using the option Clear in the Tree View of the DAG results in successful execution of the first task.

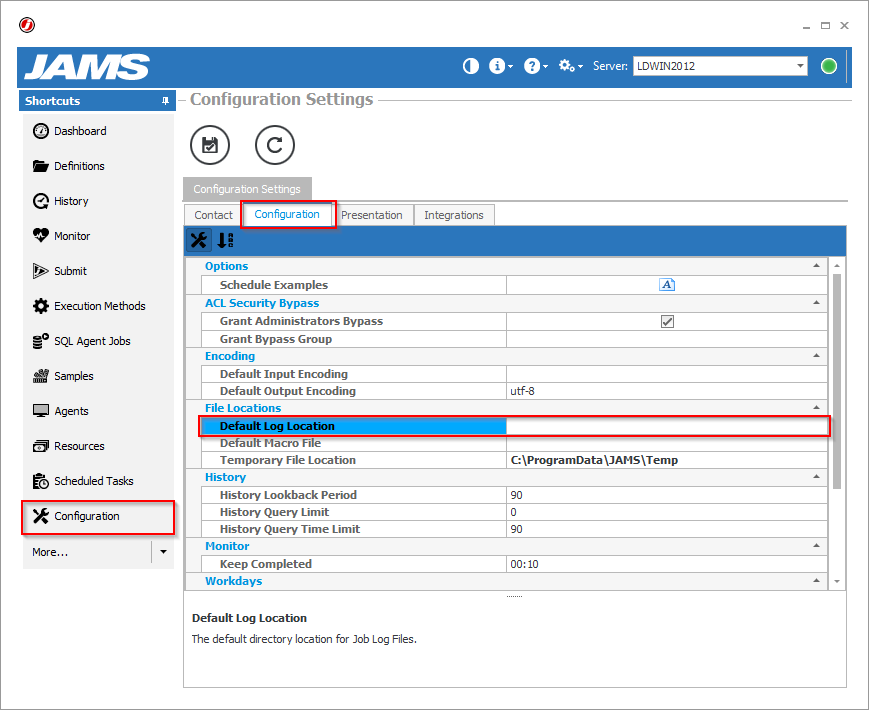

Schedules on DAGs are not executed and end without log in failed state. This setup is due to budgetary constraints.
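The pool comments in the configuration describe how `pool_size` and `max_overflow` together bound the number of database connections. As a rough illustration of that arithmetic only (this is not Airflow's or SQLAlchemy's own code), here is a minimal Python sketch. The function names and the example values 5 and 10 are hypothetical, since the excerpt does not show the pool values actually in use.

```python
from typing import Optional


def max_simultaneous_connections(pool_size: int, max_overflow: int) -> Optional[int]:
    """Upper bound on concurrently checked-out connections, or None if unbounded."""
    if pool_size < 0 or max_overflow < -1:
        raise ValueError("pool_size must be >= 0 and max_overflow must be >= -1")
    if max_overflow == -1:
        # Per the config comments: -1 means no overflow limit, so no limit
        # is placed on the total number of concurrent connections.
        return None
    if pool_size == 0:
        # Not stated in the excerpt: SQLAlchemy documents pool_size = 0
        # as "no size limit", so the total is treated as unbounded here.
        return None
    # Per the config comments: total simultaneous = pool_size + max_overflow.
    return pool_size + max_overflow


def max_sleeping_connections(pool_size: int) -> int:
    """Connections the pool keeps open while idle ("sleeping")."""
    return pool_size


if __name__ == "__main__":
    # Hypothetical values for illustration only.
    pool_size, max_overflow = 5, 10
    print("simultaneous:", max_simultaneous_connections(pool_size, max_overflow))
    print("sleeping:", max_sleeping_connections(pool_size))
    print("unbounded:", max_simultaneous_connections(pool_size, -1))
```

If the failing server runs tasks in parallel, it may be worth comparing this bound with the connection limit of the Postgres instance. Whether that has anything to do with the failed runs is an open question.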
